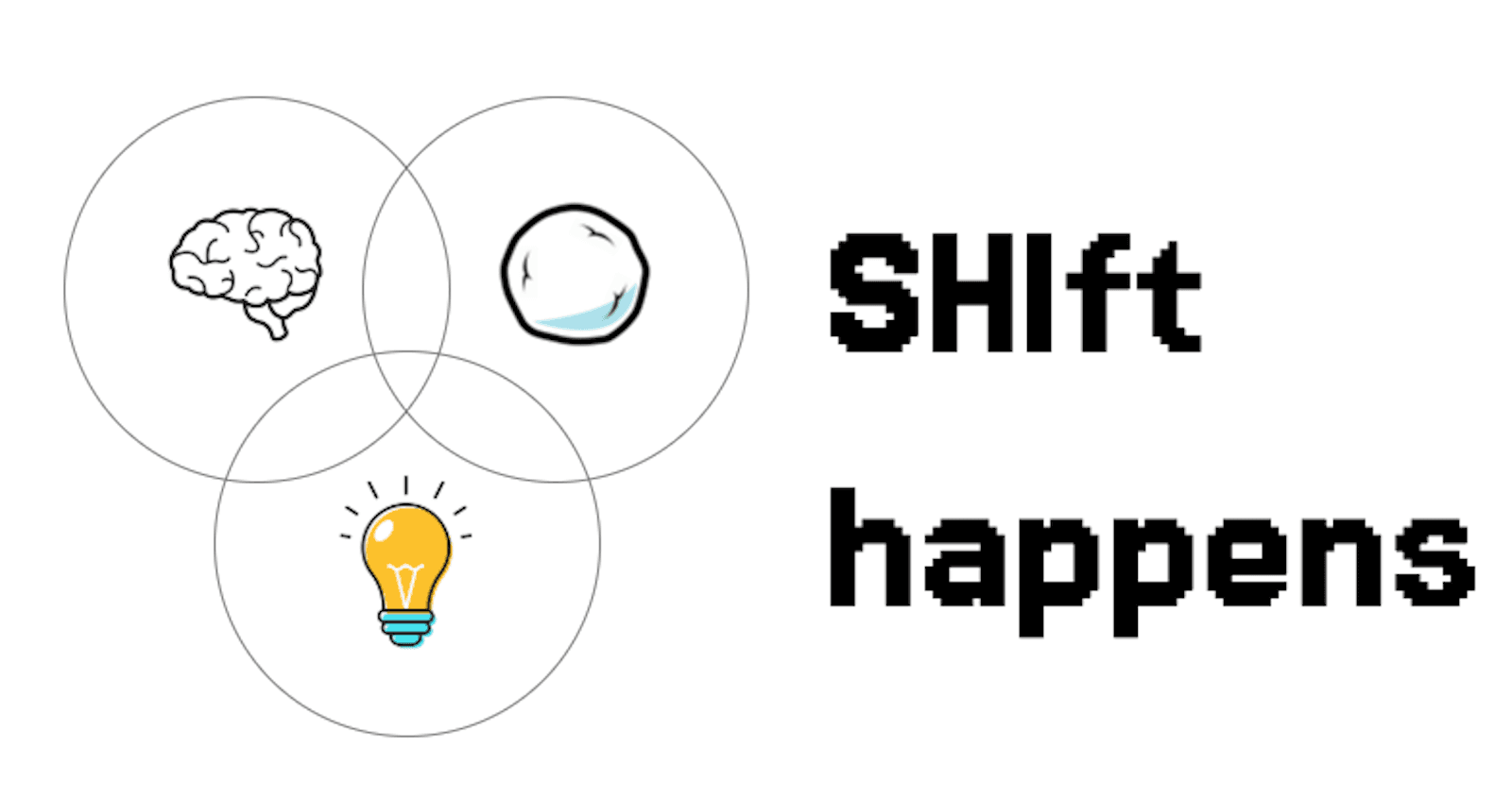

SHIft happens!

My take on the nature of change

I’ve recently stumbled upon one of those “it’s worse than we thought” posts about the jobs crisis brought on by the advent of ML/AI. It’s reasonable and has some great ideas.

But do I want to talk about it? Sure, although it’s a painful wound.

What gives?

In this post I try to explore why “SHIft happens” - when Snowballing changes, Human tendencies, and Inflection points collide.

A quote caught my attention:

It’s here, right now. It just doesn’t look quite like many expected it to.

So, the change didn’t turn out as expected. Why is that so? Why do our expectation → inference machines (I wrote about them here) give way when predicting anything but the amount of Cheetos we’re gonna pull out of the pack?

I’d reckon two reasons are at play:

Exponential error accumulation

A la LeCun’s point about LLMs’ doom from his JEPA architecture talk (covered here):
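As I understand that argument (my paraphrase, not a quote from the talk): if every generated step has a small, independent chance e of going wrong, the chance that an n-step answer stays fully correct is (1 - e)^n, which shrinks exponentially with n. A minimal sketch, where the function name and the 1% error rate are illustrative assumptions rather than measured values:

```python
def p_all_correct(per_step_error: float, steps: int) -> float:
    """Chance that every one of `steps` independent steps is correct.

    Assumes each step fails independently with the same probability,
    which is the simplification the argument itself relies on.
    """
    return (1.0 - per_step_error) ** steps


if __name__ == "__main__":
    # Hypothetical 1% per-step error rate, longer and longer outputs.
    for n in (10, 100, 1000):
        print(n, p_all_correct(0.01, n))
```

Even with a 1% per-step error, only about 37% of 100-step sequences come out fully correct under this toy assumption. The independence assumption is the contested part of the argument, so treat the numbers as intuition, not as a forecast.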

The nature of change differing from our cognitive intuition

Here’s where I’d want to dive into my labyrinthine conceptual web… I’ll leave you to guess which one it may actually be (Source: the legendary NASA spider experiment, pdf here):

So… When sci-fi authors predicted (and still do!) the future, they were often wrong about the mundane details of our everyday lives in year X - e.g. whether we’ll have seamless face identification, overarching voice interfaces, the amount of advertisement, the availability of interstellar travel and energy, etc.

Why would that be? The obvious answer is ‘predicting even macro-events and trends in such a complex world is a feat not many have achieved, wiseass’, I know… However, I had that urge to build my own intuitions, and flesh them out more clearly.

The framework

Taking the biopsychosocial model as an example, I’d offer three interconnected time- and causescapes (just coined that, y’know - the broad landscape of cause-and-effect events and paths):

Snowballing, indiscernible changes

Have you ever witnessed a glacier move? Apart from timelapse videos. Me neither - yet it’s there, all the time!

In my opinion, we often underestimate the compounding effect of the smallest events - someone got featured on TikTok’s main page, your brainy friend started an MIT student blog - kinda like the Butterfly effect, but less dramatic.

I’d cite the Annales school’s view of history as an influence, along with a term I found recently, cliodynamics. The Annales + cliodynamics combo distinguishes itself through three frameworks:

three timescapes: short events, conjunctures/cycles, durable trends (longue durée)

apart from timescapes, geoeconomic snapshots and human mentalities should be taken into account (we’ll touch on that briefly, too)

mathematical modeling, or using something akin to stock-and-flow diagrams, as per cliodynamics - as needed

The combination of the first two helps explain how history quietly snowballs: we tend to overestimate the impact of discrete events while underestimating compounding trends. And sure, it does have a name and a close cognitive bias already ➡️ Amara’s Law (coined by Roy Amara), or temporal discounting bias…
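To make the “discrete event vs. compounding trend” intuition concrete, here is a toy, stock-and-flow-flavoured sketch. Everything in it is an assumption for illustration (the function names, a starting stock of 100, a one-off jump of 10, a 1% growth rate per step); it is not a cliodynamics model.

```python
from typing import List, Optional


def one_off_event(start: float, jump: float, steps: int) -> List[float]:
    """A single shock at step 0, after which nothing changes."""
    return [start + jump] * (steps + 1)


def compounding_trend(start: float, rate: float, steps: int) -> List[float]:
    """A stock that grows by a small fixed fraction every step."""
    return [start * (1.0 + rate) ** t for t in range(steps + 1)]


def first_overtake(trend: List[float], event: List[float]) -> Optional[int]:
    """First step at which the quiet trend exceeds the loud event, if any."""
    for t, (a, b) in enumerate(zip(trend, event)):
        if a > b:
            return t
    return None


if __name__ == "__main__":
    event = one_off_event(100.0, 10.0, 100)
    trend = compounding_trend(100.0, 0.01, 100)
    print("trend overtakes event at step:", first_overtake(trend, event))
    print("after 100 steps:", event[-1], "vs", trend[-1])
```

With these made-up numbers, the barely noticeable 1% trend passes the attention-grabbing +10 jump within about ten steps and more than doubles it by step 100, which is the Amara-style pattern in miniature.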

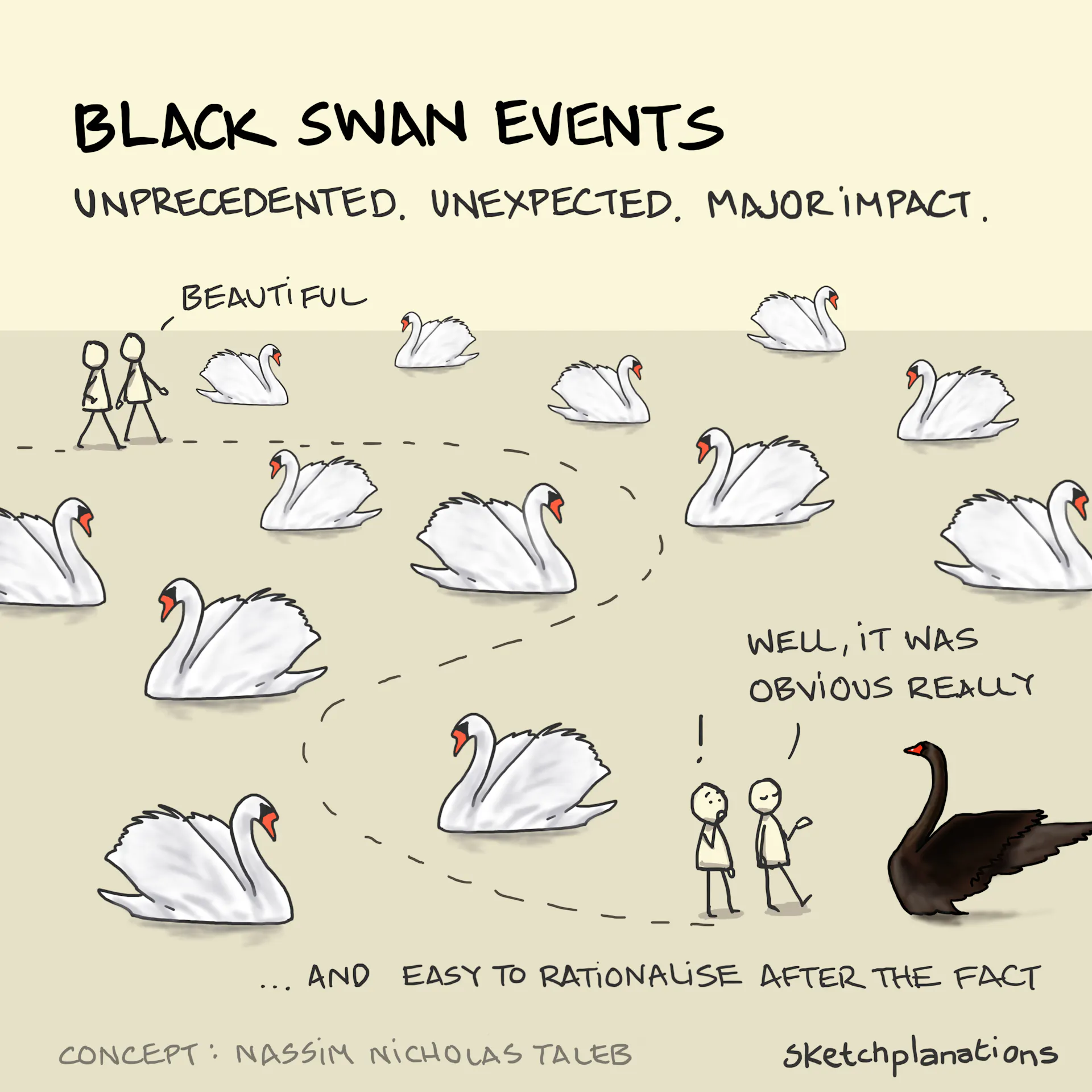

Inflection points (and ⌐🦢)

As per Nassim Taleb (unpredictable events) and Thomas Kuhn (scientific revolutions), history is not only shaped by gradual movement - sudden inflection points take place:

Definitely, progress accumulates quantitatively (e.g. CPU manufacturing) - sometimes interspersed with micro-explosions of paradigm shifts; those could look like black swans to most people, except for scientific circles/grantmakers/investors.

When ‘progress’, and subsequently, ‘problems’/anomalies accumulate (akin to a hedonic treadmill for progress - society ‘adapts’ to the good/progress and ‘demands’ more) - paradigm shifts sometimes happen:

Human nature/tendencies

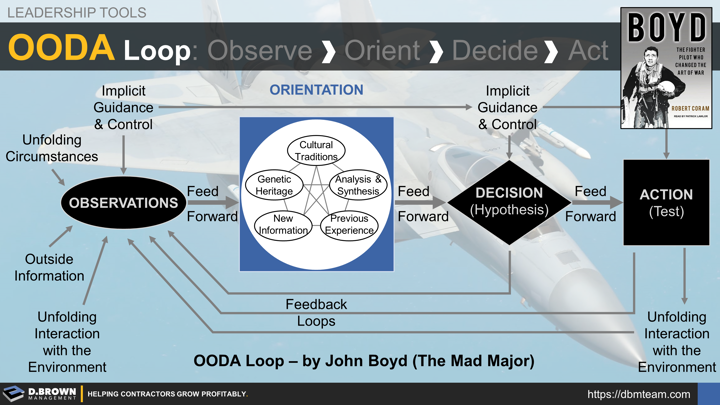

OODA (Observe-Orient-Decide-Act), predictable irrationality (Dan Ariely), human needs - it all goes there. Some theses:

Every human is a sometimes-irrational agent, as per Thucydides acting out of fear or self-interest (I’d doubt and expand that in [3]).

They act in an ~OODA loop based on circumstances, events, social and mental conditioning, etc.

They also act in varying interests (selfish, altruistic), based on both OODA and basic human needs. As per a nice list (not Maslow) here:

Certainty and variety

Significance and Contribution

Growth/Development and Love/Unity

I’d also add basic needs, e.g. sexual desire (sex sells) etc. Another comprehensive list of 30 is here. Plus, the GOAT’s (Charlie Munger) list is here, under Human Misjudgment:

- Again, the interests would largely be shaped by the current scope of attention. I.e. anything we’re not paying close attention to (which is too easy in today’s D-Day-style information bombardment) moves asynchronously but relentlessly. That may both come as a surprise AND not influence decisions directly (though it may do so indirectly).

Bottom-bottom line

People, operating under limited attention and according to varied self-interests, make small choices that, over time, accumulate into broader trends, conjunctures, and hype cycles.

Markets and science, responding to these cumulative shifts, sometimes experience sudden paradigm changes or unpredictable events.

Let’s check it out

Take the aforementioned programmer jobs - the field is said to be entering a recession, with the number of jobs dwindling to its lowest level since 1980:

But how’s it looking through the Snowballing changes, Human Tendencies, Inflection points lenses? Or, SHIft happens. Or, Oh SHIft! Sorry, stopped right there.

Snowballing changes

They do accumulate, e.g. the famous dead internet theory playing out as predicted - fewer people use StackOverflow/Quora ➡️ less high-quality training data.

Human nature

Here’s where it takes off IMO in conjunction with small changes. While checking out the list of basic human needs and wants, I could conclude several ‘cases’:

Companies freeze hiring to please Investors and/or cut costs and/or get the hype (we’re so AI-native). It disrupts workers’ certainty and safety.

Fewer Job Positions ➡️ more workload for Programmers + each Position is a lot more lucrative (rising ‘demand’ for working as a programmer, yet the supply of positions started shrinking)

More workload (9-5 to 12-6 or, ahem, 8-12-7) ➡️ more stress, more competition, higher burnout and potentially higher attrition rate. This influences control and autonomy.

Junior Job Positions have been seen as scarce for some time (they are the least ‘capital-efficient’ for companies), despite the “who’s gonna become seniors if you don’t hire juniors?” rhetoric. It undermines growth/significance for market entrants.

Inflection points

Let’s start with the assumption that the success of generative AI was a bit of a paradigm shift. And yeah - using it for programming was one, too.

What’s to happen next?

From this survey

Again, IMO:

Possible Snowballing events look like they’ll happen mostly in offices, social network posts, etc ➡️ e.g. the anti-RTO (Return To Office) trend in over 70% of companies now, overemployment tactics, etc.

Gaping conflicts of fewer job postings/more unemployed + higher turnover ➡️ unmet Human need for safety ➡️ more rants, more therapy clients due to increased frustration? (always!)

Some predict a crisis - but is this what’s important? Maybe what matters is the influx into and gentrification of e.g. trade jobs? Juniors really seem less in demand - new grads now account for just 7% of hires at Big Tech firms, down 25% from 2023.

Regardless of what happens - automation slowly affects and erodes more fields; more low-paying jobs under the sword of Damocles ➡️ more instability and unrest in low-income areas? We’re not seeing any signs of moving towards post-scarcity, and it’s unlikely most governments/companies will try doing so.

Looking through the possibility of Inflection points: more automation may happen, or we may arrive at some auto-generating software, or developers may get offshored to save money ➡️ that’ll only make the situation ‘worse’, won’t it? PS. Seems to be confirmed in real time by stats - over 10,000 positions reported lost in the Lethe.

Welcome to Teleogenic❣️

Other places I cross-post (not always) to: