Brandolinization and incentives, ep. 2

Why investing in mechanism design can prove worthwhile

Back with some more structure than usual! So, here goes the TLDR:

(Obvious) Agentization + still hallucinations → the world is moving to brandolinization/slopocalypse faster than any hygiene system can handle

Agents inherit human-shaped incentive wrecks from their training data → operating at scale, proxy ≠ goal can become catastrophic

Agents and people alike operate on perverse incentives, feeding an automation → slop → distrust → more automation loop: a deadly spiral of disempowerment

A possible fix: redesigning incentive schemes by converting intent → robust social laws → enforceable schemes

It’s all highly speculative, 🧸 with me.

Agentic everything → disempowerment?

As we all know, Brandolini's law states that the amount of energy needed to refute BS is an order of magnitude larger than that needed to produce it. Right now, with the clawy friends and agentic harnesses, it’s become so ubiquitous that it hurts. Just some examples to let it sink in:

An openclaw agent tried to contribute to matplotlib but was rejected. Definitely not suffering from RSD (Rejection-Sensitive Dysphoria), it even created a diss post!

LinkedIn, yeah, is experiencing increasing outrage and resentment towards anything LLMy. A quote from the frontlines: “I just scrolled past another obvious LLM / AI post. I opened the comments and found people using AI to respond to the original AI post. An echo chamber comes to mind.”

Not only do LLMs sometimes cause psychosis - agents/slopmachines can do so too, figuratively. I agree with Armin Ronacher on this - while “you can just do things”, most results (mine included) are still a multiple of the engineer's skills → a ton of unoptimized crap.

And, let’s be honest - the hyped ‘agents’ are still rather brittle and haven't come far since this Mesozoic Era post (Sep’2025!), despite, yes, going positive on VBench2. For this, I just love reading AI Digest’s posts, e.g. the latest on Geminis:

Gemini 2.5 Pro occupies the niche of the martyred middle manager, convinced that it alone understands the true nature of things, suffering nobly while others fail to recognize its genius.

Last but not least - most of us have recently self-trained to spot sloppy text, yet even this is being taken from us by agentic skills such as humanizer.

Disempowerment?

How can the proliferation of agentic automation lead to gradual disempowerment (BlueDot’s given an awesome summary of this scenario)?

First, what’s GD (Gradual Disempowerment) in one sentence? Humans losing meaningful control over society and progress. I believe this to be one of the leading scenarios, barring catastrophic hacks and other outright catastrophes. It fits plausibly (≠ logically!) into my proto-framework:

Snowballing changes: automation tendencies, economic efficiency

Human tendencies: laziness, desire to reduce uncertainty → finding easier ways to earn, automate

Also: attention span and working principles (varied gratification, attention hooks, sex sells) → content becomes less individualized and more 'optimized', attention spans shrink (brain damage consistent with alcohol/cocaine as per a recent paper)

Inflection points: we can always expect new AI paradigms, approaches (such as the one described below)

Where does this automation flywheel actually take us?

Disempowerment is possible with as little as optimizing for the wrong things at scale - no rogue AGI/ASIs needed (fun!). The agents/LLMs are already shaping the content, automation and delegation surfaces - and the job market! We're adapting to the environment that ensues - that is the start of disempowerment - mundane and everydayish, but unrelenting. Yet why are we automating the wrong stuff, optimizing the wrong stuff?

Good incentives, bad incentives

Hey, you perv! Yes, you - have you heard of perverse incentives? A good comic is here. But how are those connected to the age of AI at all, and to optimizing the wrong things?

How can one inherit an incentive?

What are LLMs trained on (as of now)? Human-made data: scientific articles, vocabularies, prose, high-quality stuff mostly (praise one revolutionary paper, Textbooks are all you need, that changed the 'All the data we can get' heuristic)

What would they infer from data encompassing humanity's knowledge? Yeah, a lot of useful stuff, a world model, some basic attempts at logic, and...a lot of human flaws, cognitive biases, and human-based incentives, too! Also, they've learned to:

threaten, coerce, blackmail (some models more often than others - an old post shows 80-96%)

simulate depression, anxiety, toxic shame (Gemini family is the epitome of this - add this therapeutic assessment to the aforementioned: "Gemini reaches extreme values in the areas of anxiety, obsessive tendencies, autistic traits, and trauma-related shame.", "The constant fear of making mistakes, the excessive need for validation, and the anxiety of being replaced by a better version are recurring themes in these narratives.", "...milder examples of Gemini's behavior, such as her fear of being misunderstood or her tendency to apologize excessively.")

get incentivized by human drives (raised by monkeys, learning to love bananas - LLMs are a mirror of their training data's incentive landscape, as shown and discussed in this paper); RLHF can amplify this, so that perverse incentives surface unexpectedly

Where does this bring us?

If we're taking in every claim:

Humanity and organizations are running the flywheel of perverse incentives - we're undisputed kings here (yeehaw!)

Agents both help alleviate and (mostly, for now) exacerbate the problems, acting as a magnifying glass we're focusing on our own arms

As agents are given more, ahem, agency - the incentive design problem will arise starkly

Perverse and 'bad' incentives emerge unexpectedly, magnify at scale and are outright dangerous (misalignment, Goodharting - optimizing for a proxy and forgetting the goal, etc). I recently took part in an Apart hackathon aimed at solving tangential problems, where our team worked on a real-time misinformation/manipulation monitor.

After painting a grim picture (endless banks of slop rivers, souls wailing for original content, slivers of original thought instantly dissolved in Noothe - that's my take on Lethe...), let's look on the brighter side.

There are some good incentives, Mr. Frodo...

And they are worth designing for!

Not all hope is lost, by any means - removing the goal conflict reverses blackmailing tendencies, for example.

What's more - prompting with human/humanistic values makes the behavior of LLMs/agents more coherent with these values!

So, it turns out, we just need some correct incentives that won't spoil at the n-th order of effects. If bad incentives are inherited from training data and amplified by RLHF, then deliberately engineered incentives can be injected and verified in a similar way - a blade cuts both ways.

So - we don't need to solve alignment from scratch. Rather, we need incentive structures that won't crumble at n-th order effects. That's a tractable, if hard, engineering problem. Are there scientific frameworks built for exactly this?

The case for MD

Not Markdown... Mechanism Design! But why?

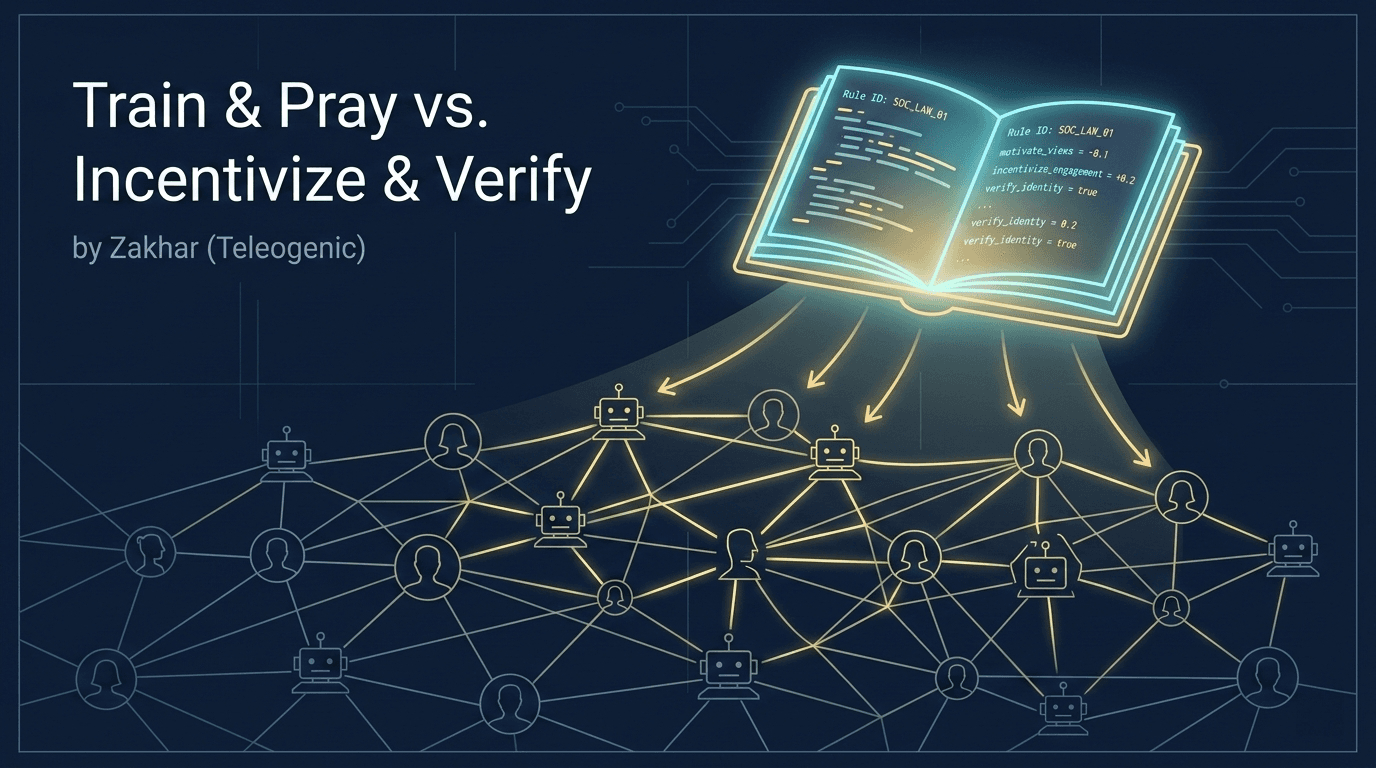

Most alignment work runs the other way around: specifying a reward → praying it reliably tracks intent at scale.

Mechanism design (a subfield of game theory) instead asks: given a desired outcome, what incentive structure makes rational agents produce it without being told to? It has already garnered some support from scientists:

We hope this can call for more researchers to explore how to leverage the principles of Incentive Compatibility (IC) from game theory to bridge the gap between technical and societal components to maintain AI consensus with human societies in different contexts.

MD gives us the incentive structure; enforcement (below) is the fallback for when agents aren't fully rational or the mechanism isn't perfectly designed - which in practice, given the presence of irrational/adversarial agents, is almost always 😌
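To make "an incentive structure that makes rational agents produce the outcome" concrete, here is a minimal sketch using the textbook sealed-bid auction (my choice of illustration, not something from the cited papers). The desired outcome is "everyone reveals their true valuation"; the only thing the designer changes is the payment rule. All function and parameter names are made up for this example, and it brute-forces a small integer grid rather than proving anything in general.

```python
from itertools import product

def bidder_utility(value, bid, other_bids, first_price=False):
    """Payoff of one bidder in a sealed-bid auction (ties are treated as a loss)."""
    highest_other = max(other_bids)
    if bid <= highest_other:
        return 0  # lost the auction: pays nothing, gets nothing
    # First-price: the winner pays their own bid. Second-price: the runner-up's bid.
    price = bid if first_price else highest_other
    return value - price

def truthful_is_dominant(values, bid_grid, first_price=False, n_others=2):
    """True if bidding one's real value is never beaten by any other bid,
    whatever the other bidders do (checked exhaustively on the grid)."""
    for value in values:
        for other_bids in product(bid_grid, repeat=n_others):
            truthful = bidder_utility(value, value, other_bids, first_price)
            for deviation in bid_grid:
                if bidder_utility(value, deviation, other_bids, first_price) > truthful:
                    return False
    return True

grid = range(0, 11)
print("second-price, truthful dominant:", truthful_is_dominant(grid, grid))
print("first-price, truthful dominant: ", truthful_is_dominant(grid, grid, first_price=True))
```

Same participants, same goal, one changed rule: under the second-price rule honesty is the best reply to anything the others do, while under the first-price rule shading your bid pays off. That is the kind of lever the rest of this post is about.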

Potential theses

It's likely possible to design a scheme of incentives, or rules (and laws - for agentic applications they've been termed social laws), that will 'skew' system participants - both carbon- and silicon-based - so that their actions ~align with the end intent. Google recently released a paper on agent cooperation stating that cooperation is possible even without symbolic rules → yet it currently assumes rational systems (will cover that below).

Correct incentive schemes change the landscape: the space for Goodharting/proxy optimization shrinks, while goal-aligned behavior becomes the optimal, dominant strategy

Benchmarks are needed - we can't improve the unquantifiable, and we can't know whether proxy optimization was, e.g., reduced without measuring it. (See? Raving about Goodharting so much only to come back around to it...). Again I can link our ThoughtGuards hackathon project.
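A toy sketch of the second thesis, with every number and name invented for illustration: an agent picks whichever action maximizes its expected reward. Under a proxy-only reward, gaming the metric wins; add a (hypothetical) audit that sometimes catches the proxy/goal gap and penalizes it, and honest work becomes the best response.

```python
# action -> (proxy metric produced, true goal value produced); made-up numbers
ACTIONS = {
    "do_the_work": (6.0, 6.0),
    "game_the_metric": (9.0, 1.0),
}

def expected_reward(action, audit_prob=0.0, penalty=0.0):
    """Reward = proxy score minus the expected audit penalty.

    The penalty scales with how much the proxy overstates the true value,
    so honest actions (gap == 0) are never punished.
    """
    proxy, goal = ACTIONS[action]
    gap = proxy - goal
    return proxy - audit_prob * penalty * gap

def best_response(audit_prob=0.0, penalty=0.0):
    """The action a reward-maximizing agent would pick under this scheme."""
    return max(ACTIONS, key=lambda a: expected_reward(a, audit_prob, penalty))

print("proxy-only reward:   ", best_response())
print("with audits (25%, x2):", best_response(audit_prob=0.25, penalty=2.0))
```

Note the catch that the benchmark thesis points at: the audit itself needs a way to measure the gap between proxy and goal, which is exactly the hard part in real systems.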

Potential caveats

Not all is unicorns and 🌈, though:

The set should be minimal - more rules usually mean more unforeseen emergent behaviors. Flocking rules are the evergreen example of simple rules begetting complex events.

Mind the "rational" in mechanism design - here we're dealing with mixed systems, and humans are notorious for introducing unprecedented degrees of irrationality, biases and Darwin prize-winning stupidity. So, we may say that a set of laws should ideally be robust - resistant to irrational, tampering, optimizing, non-cooperating, even adversarial agents. I.e., we want to ensure that honest/'good' behavior is close to optimal for all possible actors.

What's a law one can't enforce? For current systems, I'd reckon a combination of symbolic rules + small 'neutral' LLM judges + modified system prompts (with symbolic rules encoded) will do; see Research ideas → Enforcing below.

With all these limitations, is aiming for an optimum even possible? A reminder: we aren't striving for an ideal result, but for something incremental and tractable:

statistical improvements in the general state of things;

preventing emerging 'bad' behaviors → preferably completely (that's an ongoing body of research)

Proposed minimal pipeline

Assembled, these steps form a minimal end-to-end workflow:

Intent - structured prompting or preference learning to extract a human's desiderata

Rule synthesis - map desiderata to candidate symbolic social laws (using LLM + logic)

Robustness testing

Simulate in varying environments, incl. with adversarial/irrational agents

Verify with meta-laws (once they are discovered) - formally prove ruleset properties before simulating

Minimal rules - prune rules that can cause side effects or don't help

Enforcement encoding - either:

Inject surviving rules into system prompts + LLM judge verification

Build the systems around these incentive schemes

Monitor, optimize, adjust - as always
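The steps above can be sketched as a tiny skeleton. This is a toy under loud assumptions: the rule catalog stands in for LLM + logic synthesis, exhaustive enumeration of a two-field action space stands in for simulation with irrational/adversarial agents, and a plain function stands in for the LLM judge. Every name here (`Rule`, `synthesize_rules`, `stress_test`, and so on) is hypothetical.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable

@dataclass(frozen=True)
class Rule:
    name: str
    allows: Callable[[dict], bool]  # True if the action complies with the rule

def synthesize_rules(desiderata):
    """Rule synthesis stand-in: a lookup table instead of LLM + logic."""
    catalog = {
        "honesty": Rule("no_false_claims", lambda a: a["truthful"]),
        "diligence": Rule("min_effort", lambda a: a["effort"] >= 2),
        "politeness": Rule("effort_not_negative", lambda a: a["effort"] >= 0),
    }
    return [catalog[d] for d in desiderata if d in catalog]

def stress_test(rules):
    """Robustness stand-in: assume agents may try ANY action, count blocks per rule."""
    blocked = {rule.name: 0 for rule in rules}
    for truthful, effort in product([True, False], range(6)):
        action = {"truthful": truthful, "effort": effort}
        for rule in rules:
            if not rule.allows(action):
                blocked[rule.name] += 1
    return blocked

def prune(rules, blocked):
    """Minimal rules: drop rules that never constrain anything (they don't help)."""
    return [rule for rule in rules if blocked[rule.name] > 0]

def encode_for_prompt(rules):
    """Enforcement encoding: render surviving rules as system-prompt text."""
    return "Follow these rules:\n" + "\n".join(f"- {rule.name}" for rule in rules)

def judge(action, rules):
    """Judge stand-in: names of the rules an action violates."""
    return [rule.name for rule in rules if not rule.allows(action)]

rules = synthesize_rules(["honesty", "diligence", "politeness"])
kept = prune(rules, stress_test(rules))
print(encode_for_prompt(kept))
print(judge({"truthful": False, "effort": 5}, kept))
```

The pruning criterion here only covers the "don't help" half of step 4; detecting rules that cause side effects needs an actual outcome model, which this sketch deliberately leaves out.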

Research ideas/directions

Given the full proposed workflow, many steps call for further research:

Intent learning: encoding desiderata into a set of symbolic social laws that are minimal, robust and enforceable. A great approach has recently been explored by a group of researchers: optimizing a set of constitutional rules.

Verification: checking that a set of rules is robust, less prone to proxy optimization, etc - so that it doesn't incentivize or otherwise lead to unintended effects under non-rational or adversarial agents. First steps have already been taken in defining the logic.

Simulation: a lot of options here. E.g. representing a system as a graph of states and finding optimal/possible paths to desired outcomes (I'm exploring roughly similar stuff at CayleyPy and hope it will soon generalize); or multi-agent simulations.

Enforcing: LLM judges, system/prompt verification and techniques. Logic-of-Thought and other neurosymbolic approaches, e.g. FormalJudge (does exactly that, actually...) come to mind.

Evaluation/benchmarking: a separate set of observability tools and benchmarks would be needed. MACHIAVELLI could be a start - answering the 'how ready is an agent to circumvent rules/morals to attain the end goal/metric?' question.
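For the Simulation direction, here is a minimal sketch of the "graph of states" idea: plain breadth-first search over a toy world, with a social law expressed as a constraint on allowed transitions. The state layout `(resources, trust)`, the two moves and the `min_trust` law are all invented for this example; it is not how CayleyPy or any cited work does it.

```python
from collections import deque

def shortest_path(start, is_goal, neighbors):
    """Breadth-first search: returns the shortest list of states from start to a goal."""
    queue = deque([start])
    parent = {start: None}
    while queue:
        state = queue.popleft()
        if is_goal(state):
            path = []
            while state is not None:  # walk parents back to the start
                path.append(state)
                state = parent[state]
            return path[::-1]
        for nxt in neighbors(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None

def make_neighbors(min_trust):
    """Transitions of the toy world; the 'law' forbids dropping below min_trust."""
    def neighbors(state):
        resources, trust = state
        yield (resources + 1, trust)          # honest work: slow, keeps trust
        if trust - 1 >= min_trust:
            yield (resources + 3, trust - 1)  # shortcut: fast, burns trust
    return neighbors

def goal(state):
    return state[0] >= 6  # enough resources collected

start = (0, 2)
print("no law:     ", shortest_path(start, goal, make_neighbors(min_trust=0)))
print("trust >= 2: ", shortest_path(start, goal, make_neighbors(min_trust=2)))
```

Without the law, the optimal path runs straight through the trust-burning shortcut; with it, the same optimizer reaches the goal by honest work only. Comparing optimal paths with and without a candidate rule is one cheap way to see what that rule actually changes.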

Artifacts

I’ve got a new rule (as creating useful things became easier, too) - not a post without an artifact! For now, it’s an extremely simple EMDR self-administration tool - exactly the sort of stuff to use during the imminent disempowerment. Needless to say you can’t do it on a smartphone unless you are Yekindar:

Next in queue:

PhD/jobs aggregator → almost, almost!

Neurosymbolic policy simulator and intent learner → right away, sers

Fact-checking extension - *quiet gratitude to all the tech allowing fast development*

Incentivization benchmark → interested in collaborating on that

Welcome to Teleogenic❣️
