GPU/TPU vs. evolution

Or, hyperthreading at its best. And some news

But first, let’s listen to the ode to touching grass:

Now, to the dull parts.

Paving the landscape

I’ve always thought about the GPU vs. brain computing derby - maybe out of sheer enjoyment of those “Top X of…” articles or comparisons, but still.

Also:

Why there’s so much fuss about wetware computing (mentioned here)

Where we’ll potentially be headed computing- and storage-wise (e.g. new Poxiao drive tech from China - looks great, but let’s see if it’s adopted in real life)

Why did the LISP machine fail (they’ve been outpaced by microchips on their own ground)

Why 42

And other deep theoretical questions, you know.

First, there are some maxims that I’d like to introduce:

🤖 is potentially using a lot less for the ‘infrastructure’ - at the same time, the brain seems to dedicate only a small bit (about 10 bits per second) to high-level thinking

🧠 is using less energy - about 0.0125 kWh per hour (i.e. 12.5 W) compared to RTX 4090’s 0.45 kWh per hour (450 W). Otherwise we’d have to get some noice cooling system, boyy! Some are already researching that, as well as air inlets like the ones T-Rexes had
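A quick sanity check of the energy maxim, as a minimal sketch. It assumes both figures are energy used per hour of operation (so they map directly to average power); the constant and function names are mine, not from any source.

```python
# Figures from the text, read as energy per hour of operation (an assumption).
BRAIN_KWH_PER_HOUR = 0.0125  # brain
GPU_KWH_PER_HOUR = 0.45      # RTX 4090


def average_power_watts(kwh_per_hour: float) -> float:
    """Convert kWh consumed per hour into average power in watts."""
    return kwh_per_hour * 1000.0


brain_w = average_power_watts(BRAIN_KWH_PER_HOUR)
gpu_w = average_power_watts(GPU_KWH_PER_HOUR)
ratio = gpu_w / brain_w  # how many brains one GPU's power budget could feed

print(f"brain: {brain_w:.1f} W, GPU: {gpu_w:.0f} W, ratio: {ratio:.0f}x")
```

Under that reading, one GPU draws about as much as three dozen brains.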

Let’s do a comparison on the nature of data transmitted, and the parallelization capabilities.

Data

🤖 conputer: bits comprising numbers and operations. But in the end, it’s binary - 0 or 1.

Quantum computing changes that, yeah-yeah.

In neural networks, we’re definitely operating on bigger structures - usually tensors

🧠 brain: at first sight it’s binary (a neuron either activates or not), yet it’s a lot more complex at second glance (someone even did more back-of-the-napkin calculations)

action potential is slightly ‘more’ than binary: it can be stronger or weaker, at the very least

ion gradients, i.e. the difference between ion concentrations on both sides of the ion pump (they influence the activity)

secondary messengers, neurotransmitters, neurosteroids, cytokines, neuron-glia interactions etc. transferring the information inside, between cells and regions. To me, this is the inflection point - those transmit and store a lot of information: whether to activate, how strong to activate, how much to spread, which pathways to induce etc. That can be aptly called a neuron’s metabolome.

A simple example - a single messenger, cAMP, can reach ~40 bits/hour.

a synapse seems to hold 4.1-4.7 bits, a neuron has about 7,000 synapses on average, and we have about 86,000,000,000 neurons

(I know of a SWIM who’s able to write articles with, like, half that!)
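To see what the synapse figures add up to, here is a back-of-the-napkin sketch. It only multiplies the three numbers above and converts to decimal petabytes; it ignores the metabolome and everything else that isn't a synaptic 'weight', and the names are mine.

```python
# Figures quoted in the text.
BITS_PER_SYNAPSE = (4.1, 4.7)   # low and high estimates
SYNAPSES_PER_NEURON = 7_000
NEURONS = 86_000_000_000


def total_synaptic_bits(bits_per_synapse: float) -> float:
    """Bits held in all synapses, if each one stores `bits_per_synapse`."""
    return bits_per_synapse * SYNAPSES_PER_NEURON * NEURONS


def bits_to_petabytes(bits: float) -> float:
    """Convert bits to decimal petabytes (1 PB = 10**15 bytes)."""
    return bits / 8 / 1e15


low_pb = bits_to_petabytes(total_synaptic_bits(BITS_PER_SYNAPSE[0]))
high_pb = bits_to_petabytes(total_synaptic_bits(BITS_PER_SYNAPSE[1]))

print(f"synaptic storage: {low_pb:.2f}-{high_pb:.2f} PB")
```

That lands at roughly a third of a petabyte for the synapses alone.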

Let’s highly theoretically represent a neuron’s state (metabolome and data) as a vector x with d dimensions, the information rates as a vector r with one entry per synapse, and the state–synapse weighting as a d × s matrix W (s being the number of synapses). For neuron i and synapse j, W_j is the j-th column of W:

$$W = \left[ W_1, \dots, W_s \right] \in \mathbb{R}^{d \times s}, \quad W_j \in \mathbb{R}^{d}$$

Individual information per synapse:

$$\mathrm{inf}_j(x) := W_j \cdot x$$

A-and the information rate per synapse:

$$I_j = r_j \, \mathrm{inf}_j(x)$$

Finally we arrive at the possible information throughput per neuron:

$$I_i (x) = \sum_{j=1}^{s} I_j$$

Despite possible math notation fock-ups, that’s still a couple of orders of magnitude more complex than “it fires or it doesn’t”. For more information, we should turn to computational neuroscience - I’m no real Pinocchio (at least as of now).
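The formulas above can be turned into a toy calculation. This is only a sketch of the notation, not a model of a real neuron: the numbers are made up, W is stored as one weight vector per synapse, and the function names are mine.

```python
def synapse_information(w_j, x):
    """inf_j(x): dot product of synapse j's weight vector with the state x."""
    if len(w_j) != len(x):
        raise ValueError("weight vector and state must have the same length d")
    return sum(w * x_k for w, x_k in zip(w_j, x))


def neuron_throughput(W, r, x):
    """I_i(x): sum over synapses of r_j * inf_j(x)."""
    if len(W) != len(r):
        raise ValueError("need exactly one rate per synapse")
    return sum(r_j * synapse_information(w_j, x) for w_j, r_j in zip(W, r))


# Made-up toy values: d = 3 state dimensions, s = 2 synapses.
x = [1.0, 0.5, 2.0]            # neuron state (metabolome and data)
W = [[1.0, 0.0, 1.0],          # W_1
     [0.0, 2.0, 0.5]]          # W_2
r = [2.0, 4.0]                 # information rate per synapse

throughput = neuron_throughput(W, r, x)
print(throughput)  # 2.0 * 3.0 + 4.0 * 2.0 = 14.0
```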

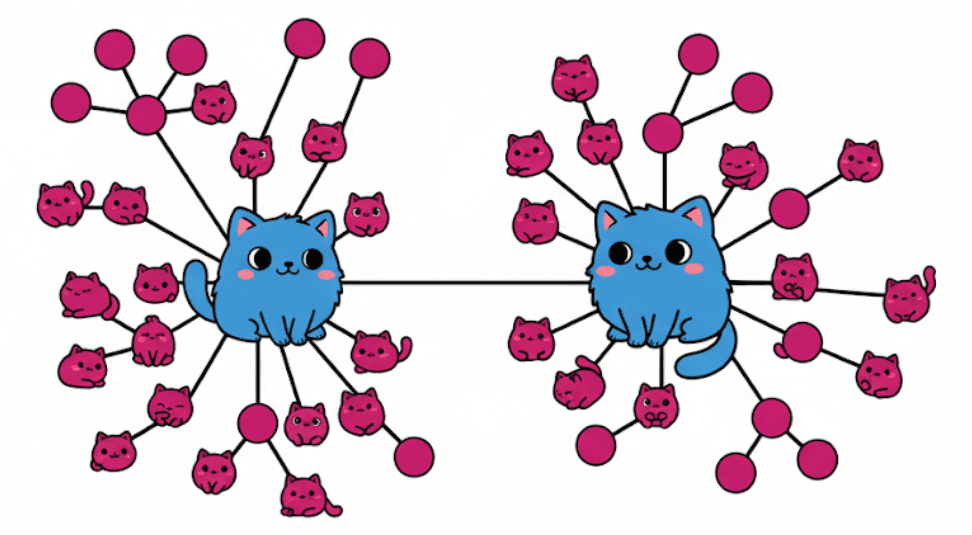

My shabby 5-min drawing skills at it:

Processing

Let’s now consider parallel capabilities.

🤖 The said RTX 4090 has 16,384 cores, each running at 2.52 GHz. Okay, I didn’t expect these levels… assuming one operation per core per clock cycle, that’s 41,287,680,000,000 or about 4.13×10¹³ operations per second.

🧠 The brain has 86 billion × 7,000 (about 6×10¹⁴) ‘cores’, each operating a lot slower (up to Hz) - but operating simultaneously, and always carrying the state/metabolome. Each neuron and, by extension, synapse, is operating in parallel to all the others.
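The two headline numbers can be reproduced with a few lines. A sketch under loud assumptions: one operation per GPU core per clock cycle, every synapse counted as a 'core', and a synaptic event treated as if it were comparable to a GPU operation (it really isn't). The per-synapse rate is left as a parameter, and all names are mine.

```python
GPU_CORES = 16_384
GPU_CLOCK_HZ = 2_520_000_000        # 2.52 GHz
NEURONS = 86_000_000_000
SYNAPSES_PER_NEURON = 7_000


def gpu_ops_per_second(ops_per_core_per_cycle: int = 1) -> int:
    """Peak GPU operations per second under the one-op-per-cycle assumption."""
    return GPU_CORES * GPU_CLOCK_HZ * ops_per_core_per_cycle


def brain_units() -> int:
    """Number of parallel 'cores' if every synapse counts as one."""
    return NEURONS * SYNAPSES_PER_NEURON


def brain_events_per_second(rate_hz: float) -> float:
    """Synaptic events per second if every synapse runs at `rate_hz`."""
    return brain_units() * rate_hz


def break_even_rate_hz() -> float:
    """Per-synapse rate at which raw event count equals the GPU's op count."""
    return gpu_ops_per_second() / brain_units()


print(f"GPU ops/s: {gpu_ops_per_second():.3e}")
print(f"brain 'cores': {brain_units():.3e}")
print(f"break-even rate: {break_even_rate_hz():.4f} Hz per synapse")
```

With these inputs the raw counts cross over well below 1 Hz per synapse, which says more about the sheer number of synapses than about how comparable the two kinds of 'operation' are.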

TL;DR

🤖:

a lot faster per core, yet synchronous

homogeneous architecture (mostly; we still have differing core types on CPUs and GPUs, right?)

requires less upkeep, e.g. for movement, vision, proprioception, etc.

precise in the sense that everything is low-noise, stored and calculated deterministically

even single-bit errors pose problems and require correction

almost no on-chip re-learning, rather static

state/memory is stored separately, needs transmission

🧠:

a lot more parallelizable and fully asynchronous

heterogeneous (neuron/glial cell classes number in the thousands - more than 3,000 types at the least)

state is higher-dimensional and carries more information, i.e. the information amounts per ‘cycle’ are incomparable. In addition, it’s stored in-cell. Essentially, it’s all in-memory (Redis aficionados here?)

error-tolerant via self-repair and redundant transmission. You can’t lick a badger twice, and you can’t fool a neuron

probabilistic in its nature, possibly quantum (even more states encoded). UPD: looks like tryptophan complexes may indeed elicit quantum effects

on-line learning and adaptation, ‘weights’ updated dynamically all the time

Neuromorphic chips try to mimic the best of the brain’s capabilities (namely, parallel processing). And yeah - the reason GPUs and TPUs fared better in the first place, before optimizations started flowing, was precisely the same: more cores working in parallel. More on them a tad later.

One more product I’d love to touch on is GreenArrays’ chips:

144 separate CPUs

each has built-in RAM and can communicate with adjacent cores

energy mostly consumed during computation

ability to continue the computation after power outage

Looks a bit more like the brain, eh?

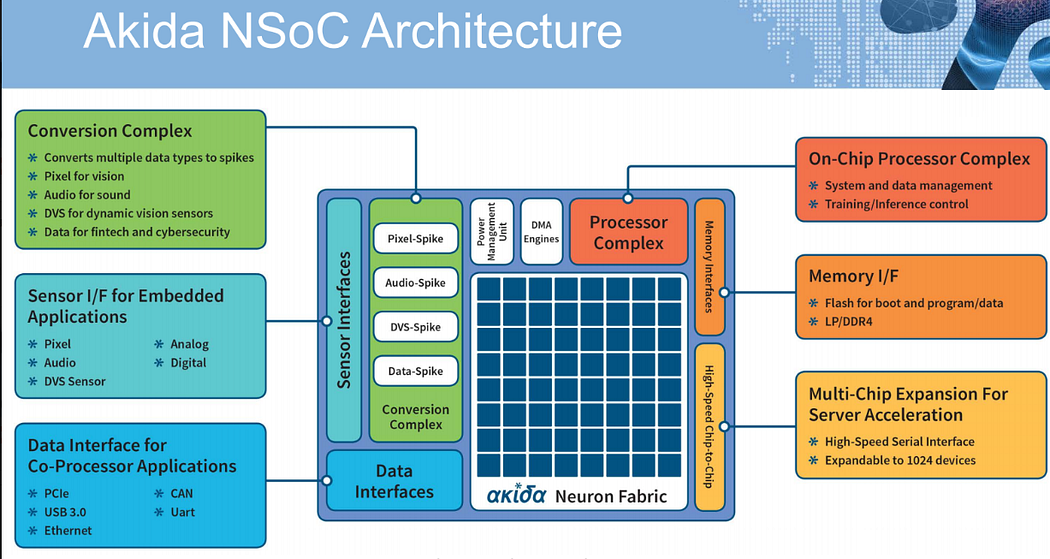

Also, let’s consider the promised land of neuromorphic chips, e.g. BrainChip’s Akida:

Is made of ‘spiking neurons’ (1.2 M of them) connected via ‘synapses’, all organized into 80 nodes with 6 MB on-device memory each

Converts data to ‘spikes’, i.e. signals that output activation signals able to propagate further via synapses (figure from here):

Can scale to up to 1,024 chips

They state nodes work independently, but apparently they share the same clock (from their deck)

Can perform 🧠 on-chip learning - i.e. changing weights

So, these look more or less like the brain (hence the neuromorphic, genius)

And that’s my inner mental landscape after trying to understand how virtually anything works:

Strategic conclusion

I hope that was okay for a short ‘eh, differences’ snapshot. As always, strategically the thing that counts remains: which paradigm will be leading in the next 1-2-5-10-30 years?

IMO there are a lot of factors that could influence that:

1. Research funding and breakthroughs (something becoming real or cheap/reproducible enough)

2. Legal implications (the legal-illegal/tax-intensive/incentivized spectra)

3. Big players pushing some paradigm (or a couple to be safe) to the markets, sometimes because of (2)

4. Some paradigm becoming or staying a dead-end (e.g. because (1) hasn’t happened yet)

News and viz’s

Conputer.

Banana.

Interested in how many people had slipped on banana peels throughout history? Here’s an investigation.

Music

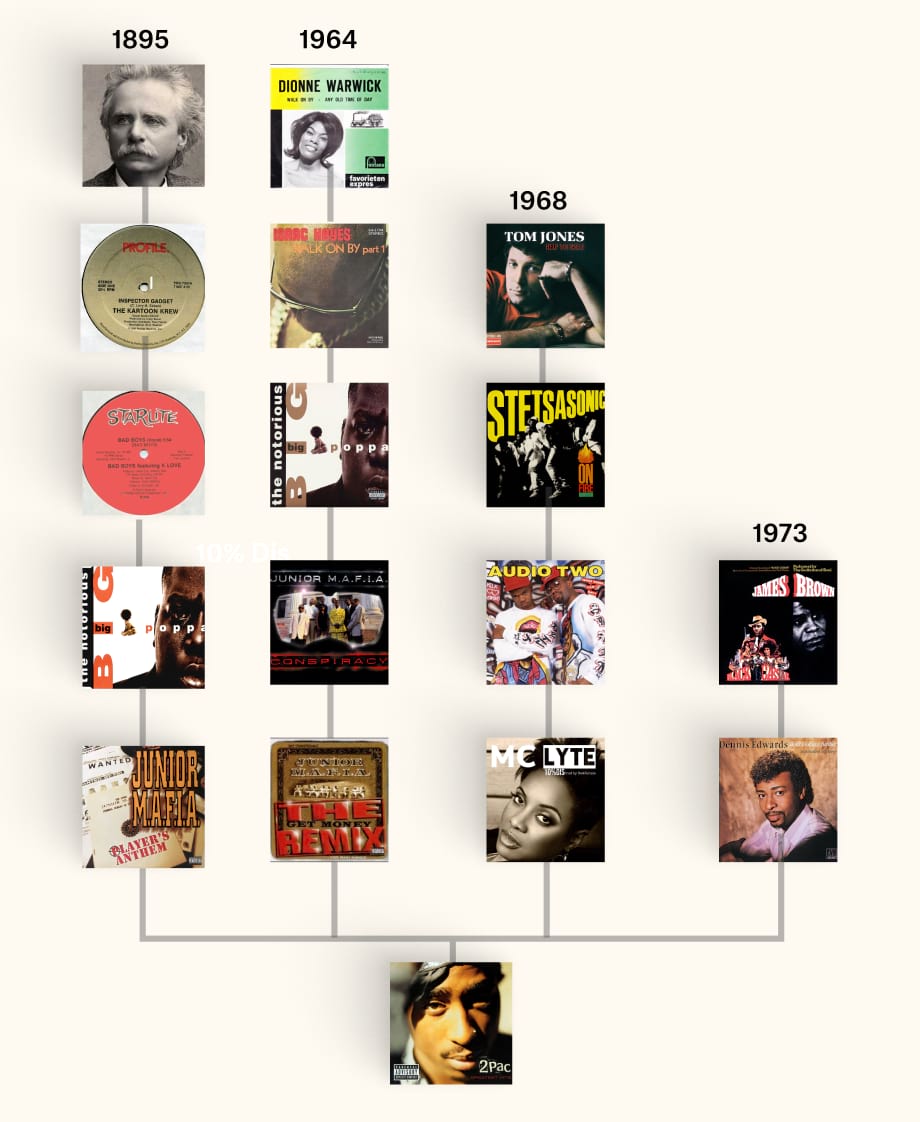

Pudding again at it - a pretty awesome insight on how melodies and sounds themselves are ~~plagiarized~~ inherited?

Best-ial wines

Another awesome Pudding viz - which animals correlate with higher wine ratings.

TLDR: choose fish and bugs, don’t choose lizards.

Aging like ~~shitty~~ fine wine (movies)

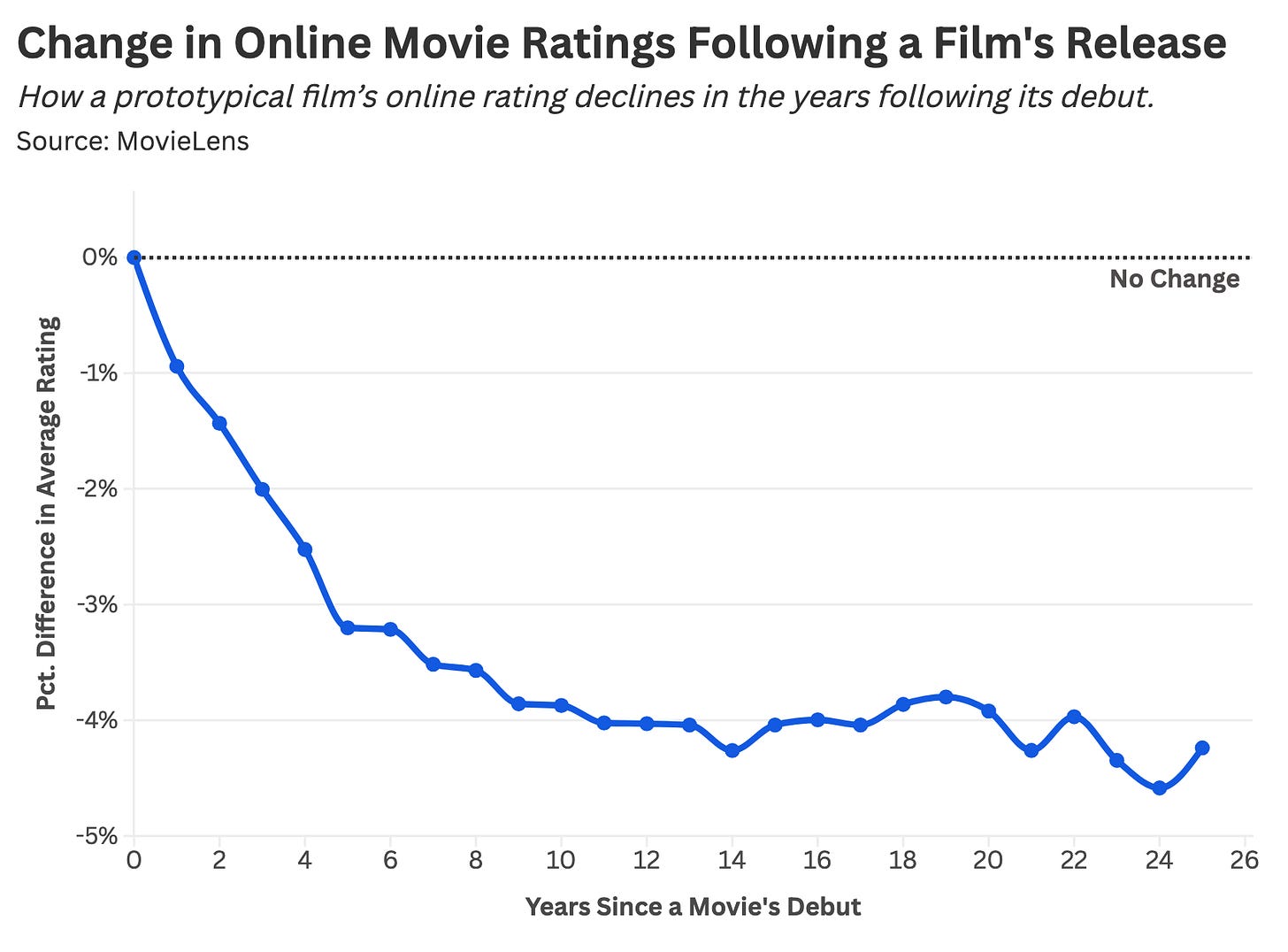

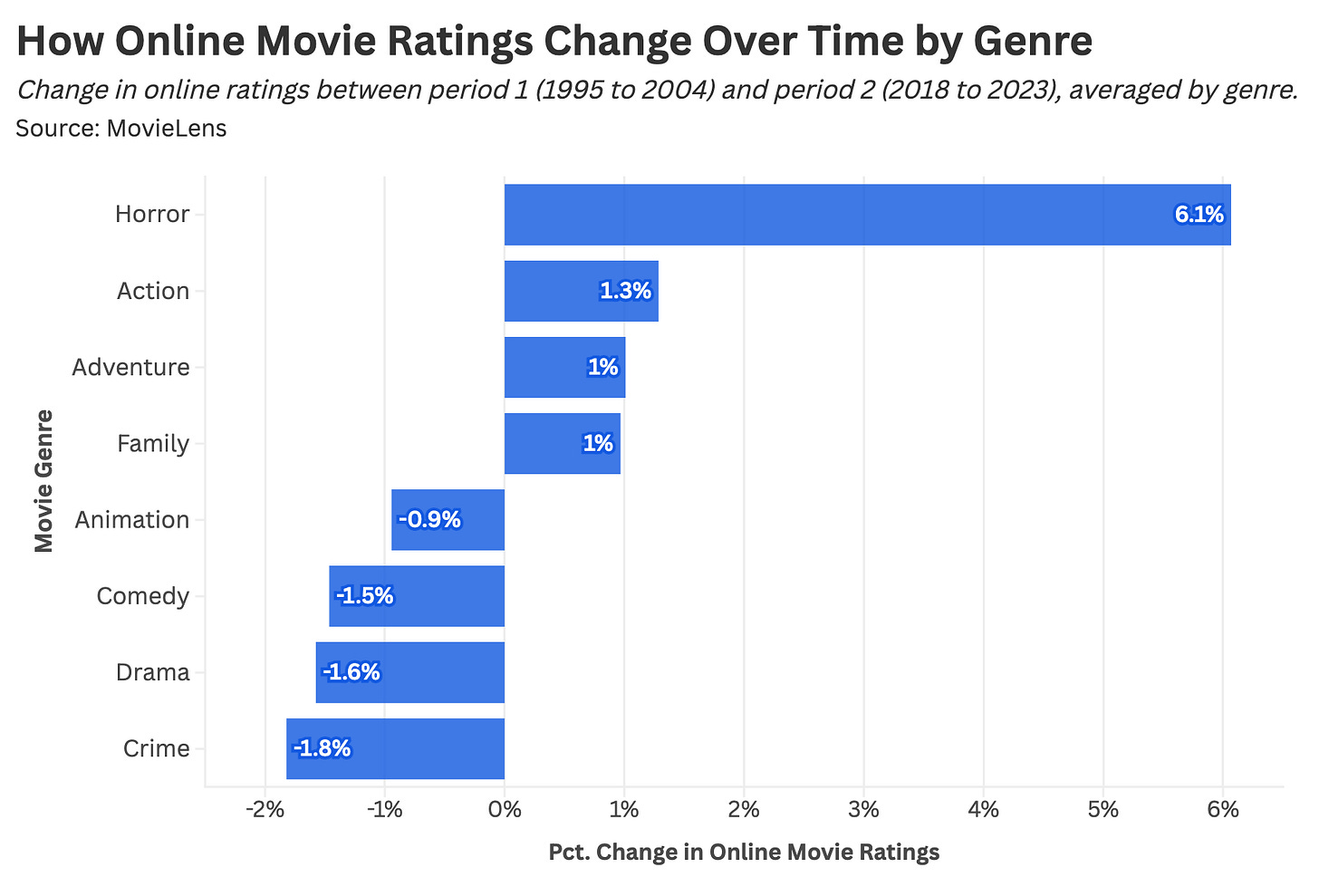

An exploration of how different movies age, judged by ratings in two time periods: 1995-2003 and 2018-2023.

TLDR: movies age badly on average; some genres, like horror, age better (below).

Think twice about animals at all…

They’ve got a weird (!) effect on life satisfaction (1) - slightly to the south.

However, as the authors mention, that may be rooted in reverse causation: people get pets to deal with loneliness and dissatisfaction.

Welcome to Teleogenic❣️
